Imagine that our Agent has played several such episodes. The core of the Cross-Entropy method is to throw away bad episodes and train on better ones, so how we find the better ones?. Every episode is a sequence of observations of states that the Agent has got from the Environment, actions it has issued, and rewards for these actions. The way we’re going to do this is considering the problem as a supervised learning problem where observed states are considered the features (input data) and the actions constitute the labels.ĭuring the agent’s lifetime, its experience is presented as episodes. Since we will consider a neural network as the heart of this first Agent, we need to find some way to obtain data that we can assimilate as a training dataset, which includes input data and their respective labels. This is the base of the Cross-Entropy method.

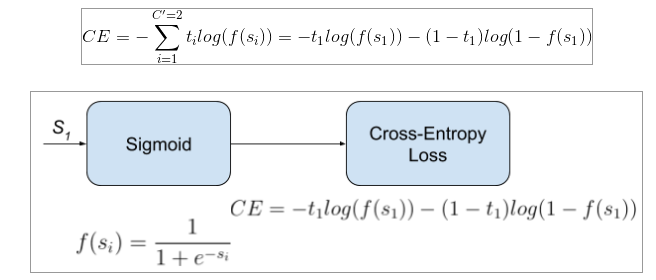

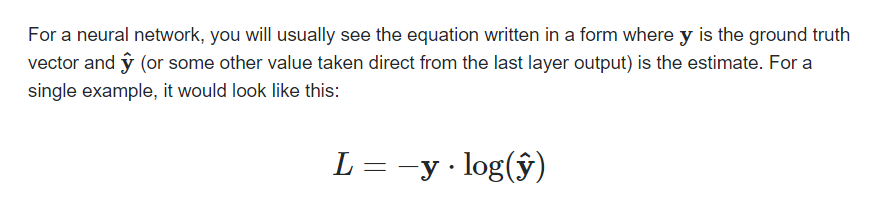

Then repeat this process in order to our policy gradually gets better. Then we improve our policy by playing a few episodes and then adjusting our policy (parameter of the neural network) in a way that it is more efficient. We want a policy, a probability distribution, and we initialize it at random. In this case we refer to it as a stochastic policy, because it returns a probability distribution over actions rather than returning a deterministic single action. In our case the output of our neural network is an action vector that represents a probability distribution as shown visually in the following Figure: In practice, the policy is usually represented as a probability distribution over actions (that the Agent can take at a given state), which makes it very similar to a classification problem presented before ( in the Deep Learning post), with the amount of classes being equal to the amount of actions we can carry out. In future posts, we will go in more deeply about this type of method. We refer to the methods that solve this type of problem as Policy-Based methods that train the neural network that produces the policy. In this post, we will consider that the core of our Agent will be a neural network that produces the policy. Remember that a policy, denoted by □(□|□), says which action the Agent should take for every state observed. The Cross-Entropy MethodĬross-Entropy is considered an Evolutionary Algorithm: Some individuals are sampled from a population, and only the “elite” ones govern future generations’ characteristics.Įssentially, what the Cross-Entropy method does is take a bunch of inputs, see the outputs produced, choose the inputs that have led to the best outputs and tune the Agent till we are satisfied with the outputs we see. It is an evolutionary algorithm for parameterized policy optimization that John Schulman claims works “embarrassingly well” on complex RL problems. 
In this post we will start with Cross-Entropy method that will help to the reader to warm-up in merging Deep Learning and Reinforcement Learning. From this post onwards we will explore different methods to obtain a policy that allows an Agent to make decisions. For this purpouse the Agent employs a policy □, as a strategy to determine the next action a based on the current state s. In a previous posts we advanced that an Agent make decisions to solve complex decision-making problems under uncertainty. After a parenthesis of three posts introducing basics in Deep Learning and Pytorch, in this post we put the focus back to merge Reinforcement Learning and Deep Learning.
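The section stops at the question of how to find the better episodes. This post does not give the answer, so the following is an assumption: one common approach is to compute each episode’s total reward and keep only the episodes whose reward reaches a chosen percentile of the batch.

The minimal pure-Python sketch below does that. It then flattens the surviving episodes into the supervised dataset described above, where observed states become the features and the issued actions become the labels. The names `EpisodeStep`, `Episode`, `percentile` and `filter_elite` are hypothetical, and the 70th-percentile default is only an illustrative choice.

```python
import math
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class EpisodeStep:
    observation: List[float]  # state observed from the Environment
    action: int               # action the Agent issued in that state


@dataclass
class Episode:
    reward: float  # total (undiscounted) reward collected in the episode
    steps: List[EpisodeStep] = field(default_factory=list)


def percentile(values: List[float], q: float) -> float:
    """q-th percentile (0..100) with linear interpolation, as in NumPy's default."""
    if not values:
        raise ValueError("percentile of an empty list")
    ordered = sorted(values)
    pos = (len(ordered) - 1) * q / 100.0
    lower, upper = math.floor(pos), math.ceil(pos)
    return ordered[lower] + (ordered[upper] - ordered[lower]) * (pos - lower)


def filter_elite(
    episodes: List[Episode], q: float = 70.0
) -> Tuple[List[List[float]], List[int], float]:
    """Keep episodes whose reward is at least the q-th percentile (hypothetical helper).

    Returns (features, labels, threshold): the states and actions of the elite episodes.
    """
    threshold = percentile([e.reward for e in episodes], q)
    features: List[List[float]] = []
    labels: List[int] = []
    for episode in episodes:
        if episode.reward < threshold:
            continue  # throw away the "bad" episodes
        for step in episode.steps:
            features.append(step.observation)  # input data
            labels.append(step.action)         # label
    return features, labels, threshold
```

For example, suppose the episode rewards are 1.0, 5.0, 3.0 and 10.0 and `q=50.0`. The threshold is then 4.0, so only the steps of the episodes with reward 5.0 and 10.0 end up in the training set.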

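To make the stochastic policy and the classification analogy concrete, here is a minimal sketch under stated assumptions. Instead of a PyTorch network, it uses a hypothetical `LinearSoftmaxPolicy`: a single linear layer followed by a softmax, written in plain Python so it runs without dependencies.

- `probabilities` returns the distribution $\pi(\cdot \mid s)$.
- `sample_action` draws an action from that distribution, which is what makes the policy stochastic.
- `train_step` performs one gradient-descent step on the cross-entropy loss over (state, action) pairs, treating the problem as classification with one class per action.

In PyTorch one would normally use `nn.Linear` layers with `nn.CrossEntropyLoss` instead.

```python
import math
import random
from typing import List


class LinearSoftmaxPolicy:
    """Hypothetical stochastic policy pi(a|s): linear layer + softmax over actions."""

    def __init__(self, n_features: int, n_actions: int, seed: int = 0):
        rng = random.Random(seed)
        # Random initialization of the weights (biases start at zero).
        self.weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_features)]
                        for _ in range(n_actions)]
        self.bias = [0.0] * n_actions

    def probabilities(self, state: List[float]) -> List[float]:
        """Softmax over the logits: one probability per action."""
        logits = [sum(w * s for w, s in zip(row, state)) + b
                  for row, b in zip(self.weights, self.bias)]
        top = max(logits)  # subtract the max for numerical stability
        exps = [math.exp(z - top) for z in logits]
        total = sum(exps)
        return [e / total for e in exps]

    def sample_action(self, state: List[float], rng: random.Random) -> int:
        """Draw an action according to pi(.|state) instead of taking the argmax."""
        probs = self.probabilities(state)
        return rng.choices(range(len(probs)), weights=probs, k=1)[0]

    def train_step(self, features: List[List[float]], labels: List[int],
                   lr: float = 0.5) -> float:
        """One gradient step on the mean cross-entropy loss; returns the loss before the update."""
        n = len(features)
        grad_w = [[0.0] * len(row) for row in self.weights]
        grad_b = [0.0] * len(self.bias)
        loss = 0.0
        for state, action in zip(features, labels):
            probs = self.probabilities(state)
            loss -= math.log(probs[action])
            for k, p in enumerate(probs):
                # d(loss)/d(logit_k) = p_k - 1[k == action]
                delta = p - (1.0 if k == action else 0.0)
                grad_b[k] += delta / n
                for j, s in enumerate(state):
                    grad_w[k][j] += delta * s / n
        for k, row in enumerate(self.weights):
            self.bias[k] -= lr * grad_b[k]
            for j in range(len(row)):
                row[j] -= lr * grad_w[k][j]
        return loss / n
```

The full loop works as follows:

1. Call `train_step` repeatedly on the (state, action) pairs kept from the better episodes.
2. Play new episodes with `sample_action`.
3. Keep the better episodes again and repeat.

This gives the play, select and train cycle the text describes, in which the policy gradually gets better.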